Introduction

AWS Lambda changed how we think about backend compute when it launched in 2014. Twelve years on, the service has matured enormously — but one constraint has remained stubbornly fixed: functions are expected to be short-lived and stateless. Any workflow that needed to span minutes, hours, or await human input required bolting on external machinery — DynamoDB checkpointing tables, Step Functions state machines, SQS retry loops, or Temporal clusters running on EC2. All of that works, but it adds operational weight that erodes the original serverless value proposition.

Lambda Durable Functions, announced at AWS re:Invent 2025 and reaching general availability in early 2026, changes the equation fundamentally. It lets you write multi-step, long-running, stateful logic directly inside a Lambda function handler — in sequential imperative code, in Python, TypeScript, or JavaScript — while the platform transparently handles checkpointing, failure recovery, and cost-efficient pausing during wait periods. No external state store. No orchestration service. No idle compute charges while waiting for a webhook or human approval.

This article is a deep technical examination of how Durable Functions work, when they are the right architectural choice, and how to deploy them with production-grade reliability. We cover the checkpoint-and-replay engine, the steps and waits primitives, AI agent orchestration patterns, comparison with AWS Step Functions, cost modelling, observability, and the operational constraints you need to plan around before going to production.

The Statefulness Problem in Serverless Architecture

To appreciate why Durable Functions matter, it helps to understand the problem space they solve. Consider a straightforward e-commerce order fulfillment workflow: validate payment credentials, reserve inventory, wait up to 72 hours for fraud review, trigger shipment if approved or issue a refund if rejected, then notify the customer. On paper, five steps. In a Lambda-based microservices architecture, this becomes a distributed systems coordination challenge involving at least three queues, two DynamoDB tables, a Step Functions state machine with six states, and carefully designed idempotency keys to prevent double-charges on retries.

The cognitive and operational overhead is significant. Engineers building these systems spend more time reasoning about retry semantics, dead-letter queue routing, and checkpoint consistency than on the actual business logic. When a Lambda function times out mid-workflow — after debiting the customer but before reserving inventory — the recovery path must be explicitly designed, tested, and monitored. For small teams or high-velocity feature development, this is a meaningful tax on engineering throughput.

The Durable Execution programming model addresses this by making the runtime responsible for fault tolerance rather than the developer. Your code expresses intent sequentially; the infrastructure ensures that intent is carried out exactly once, across however many retries, restarts, or suspensions are required.

What Lambda Durable Functions Actually Are

Lambda Durable Functions are not a new compute primitive — they are a new programming model layered on top of the existing Lambda execution environment. You enable the feature at function creation time, include the open-source Durable Execution SDK in your deployment package, and structure your handler around the SDK’s primitives. The Lambda runtime, AWS infrastructure, and an AWS-managed journal handle the rest.

The feature is built on the concept of durable execution, a pattern pioneered by workflow engines like Microsoft’s Durable Functions for Azure, Temporal, and Cadence. The fundamental idea is that a workflow’s execution history is the source of truth, not the in-memory state of a running process. Progress is checkpointed to a journal after each logical step. If the function or underlying host fails, the workflow resumes by replaying the execution history from the journal — fast-forwarding through completed steps and re-executing only the steps that had not yet succeeded.

The Checkpoint-and-Replay Engine: Under the Hood

Understanding the replay mechanism is essential before writing production Durable Functions code, because it shapes how you reason about side effects, timing, and external interactions.

When a Durable Function executes for the first time, it runs sequentially top-to-bottom. Each time it reaches a step() call, the SDK executes the provided function, waits for the result, and atomically checkpoints that result to the AWS-managed journal before proceeding. If the Lambda function is interrupted at any point — timeout, crash, or deliberate suspension via a wait() call — the journal preserves everything that completed.

On the next invocation (triggered by the runtime when the function is resumed or retried), the function handler runs from line one again. As it encounters each step() call, the SDK checks the journal: has this step already completed? If yes, it returns the recorded result immediately without re-executing the step’s logic. The handler effectively fast-forwards through history. Only the step that was interrupted executes again.

random.uuid4(), datetime.now(), or any other non-deterministic operation directly in the handler or inside replayed steps. The SDK provides replay-safe alternatives: dc.uuid(), dc.now(). Violating this constraint causes divergence between the original execution and the replay, leading to silent data corruption or incorrect workflow behaviour.sequenceDiagram

participant H as Lambda Handler

participant SDK as Durable Execution SDK

participant J as AWS-Managed Journal

participant E as External System

Note over H,J: First execution

H->>SDK: step("validate-payment", fn)

SDK->>E: Execute fn() - call payment API

E-->>SDK: Result: {txn_id: "T-9921"}

SDK->>J: Checkpoint step result

H->>SDK: wait("fraud-review", 72h)

SDK->>J: Record WAIT state

Note over H,J: Function suspended - zero compute billing

Note over H,J: Resumed after fraud team approves

H->>SDK: step("validate-payment", fn)

SDK->>J: Journal has result - SKIP re-execution

SDK-->>H: Return {txn_id: "T-9921"} from journal

H->>SDK: step("fulfill-order", fn)

SDK->>E: Execute fn() - trigger shipment

E-->>SDK: Result: {shipment_id: "SHP-441"}

SDK->>J: Checkpoint step result

H-->>H: Return final resultCore Primitives: steps, waits, and Replay-Safe Utilities

The Durable Execution SDK exposes a small, composable API surface. Mastering these two primitives and the replay-safe utilities covers 95% of production use cases.

The steps Primitive

A step wraps any callable — a function, a lambda, a coroutine — making it automatically retried on failure and checkpointed on success. Steps are the atomic unit of durable execution. Each step is identified by a string name that must be unique within the workflow. This name becomes the journal key for checkpoint lookup during replay.

import boto3

import json

from durable_execution import DurableContext

def handler(event, context):

dc = DurableContext(context)

# Each step has a unique name, a callable, and optional retry config

payment = dc.step(

name="validate-payment",

fn=lambda: call_payment_service(event["card_token"], event["amount_cents"]),

retry_config={

"max_attempts": 5,

"initial_interval_seconds": 2,

"backoff_coefficient": 2.0, # Exponential backoff

"max_interval_seconds": 30,

"non_retryable_errors": ["CardDeclinedError", "InvalidCardError"]

}

)

# Steps can depend on results of prior steps

inventory = dc.step(

name="reserve-inventory",

fn=lambda: reserve_stock(

sku=event["sku"],

quantity=event["qty"],

reservation_ref=payment["txn_id"] # Use prior step result

)

)

return {

"status": "reserved",

"txn_id": payment["txn_id"],

"reservation_id": inventory["reservation_id"]

}

The waits Primitive

A wait suspends the function execution until an external signal arrives or a timeout expires. Crucially, no compute resources are consumed during the wait period. The function is fully de-allocated. When the signal arrives — via a callback URL, an SQS message, an SNS notification, or an SDK API call — Lambda resumes the function from the checkpoint immediately preceding the wait.

def order_workflow(event, context):

dc = DurableContext(context)

dc.step("charge-payment", lambda: charge_card(event["card_token"], event["total"]))

# Suspend for up to 72 hours - zero compute cost during wait

# The wait returns whatever payload the external system sends on resume

fraud_decision = dc.wait(

name="fraud-review-approval",

timeout={"hours": 72},

on_timeout="reject" # If timeout expires, resume with this signal name

)

if fraud_decision.signal_name == "reject":

dc.step("issue-refund", lambda: refund_payment(event["card_token"], event["total"]))

return {"status": "rejected", "reason": "fraud_timeout_or_rejection"}

# Approved - continue fulfillment

dc.step("fulfill-order", lambda: trigger_fulfillment(event["order_id"]))

dc.step("send-confirmation", lambda: send_email(event["customer_email"]))

return {"status": "fulfilled"}

# In your fraud review system - send approval signal to resume the workflow

def approve_fraud_review(execution_id: str, reviewer: str):

import boto3

lambda_client = boto3.client("lambda")

lambda_client.invoke(

FunctionName="order-workflow",

InvocationType="Event",

Payload=json.dumps({

"_durable_signal": {

"execution_id": execution_id,

"signal_name": "approve",

"payload": {"reviewer": reviewer, "approved_at": dc.now().isoformat()}

}

})

)

AI Agent Orchestration: The Killer Use Case for 2026

The most compelling production use case for Lambda Durable Functions in 2026 is AI agent orchestration. Agentic AI systems — LLM-based agents that iteratively call tools, accumulate conversation context across multiple turns, escalate to human reviewers, and retry on reasoning failures — map almost perfectly onto the Durable Execution model.

Traditional approaches to agentic AI pipelines require either keeping a process alive for the full agent execution (expensive and fragile) or implementing custom checkpointing (error-prone). Durable Functions provide a clean, infrastructure-managed solution: each LLM call and each tool execution is a checkpointed step. If the Lambda times out mid-agent-loop, the next invocation replays from the last checkpoint and skips the completed tool calls.

import boto3, json

from durable_execution import DurableContext

bedrock = boto3.client("bedrock-runtime", region_name="us-east-1")

TOOL_DEFINITIONS = [

{"name": "search_knowledge_base", "description": "Query internal knowledge base"},

{"name": "execute_sql", "description": "Run read-only SQL against data warehouse"},

{"name": "send_report_email", "description": "Send formatted report to stakeholders"},

]

def ai_analyst_agent(event, context):

dc = DurableContext(context)

task = event["task"]

max_iters = event.get("max_iterations", 12)

conversation = [{"role": "user", "content": task}]

for i in range(max_iters):

# Each LLM call is a checkpointed step - replays skip completed calls

llm_out = dc.step(

name=f"llm-iter-{i}",

fn=lambda msgs=list(conversation): bedrock.invoke_model(

modelId="anthropic.claude-3-5-sonnet-20241022-v2:0",

body=json.dumps({

"messages": msgs,

"tools": TOOL_DEFINITIONS,

"max_tokens": 4096,

"temperature": 0.1

})

)

)

body = json.loads(llm_out["body"].read())

if body["stop_reason"] == "end_turn":

return {

"result": body["content"][0]["text"],

"iterations": i + 1,

"escalated": False

}

# Execute each tool call as its own checkpointed step

tool_results = []

for tool_call in [b for b in body["content"] if b["type"] == "tool_use"]:

result = dc.step(

name=f"tool-{tool_call['name']}-iter-{i}",

fn=lambda tc=tool_call: dispatch_tool(tc["name"], tc["input"]),

retry_config={"max_attempts": 3}

)

tool_results.append({

"type": "tool_result",

"tool_use_id": tool_call["id"],

"content": json.dumps(result)

})

conversation.append({"role": "assistant", "content": body["content"]})

conversation.append({"role": "user", "content": tool_results})

# Max iterations reached - escalate to human analyst

human_input = dc.wait("human-escalation", timeout={"hours": 48})

return {

"result": human_input.payload.get("analyst_response", "No response"),

"escalated": True

}

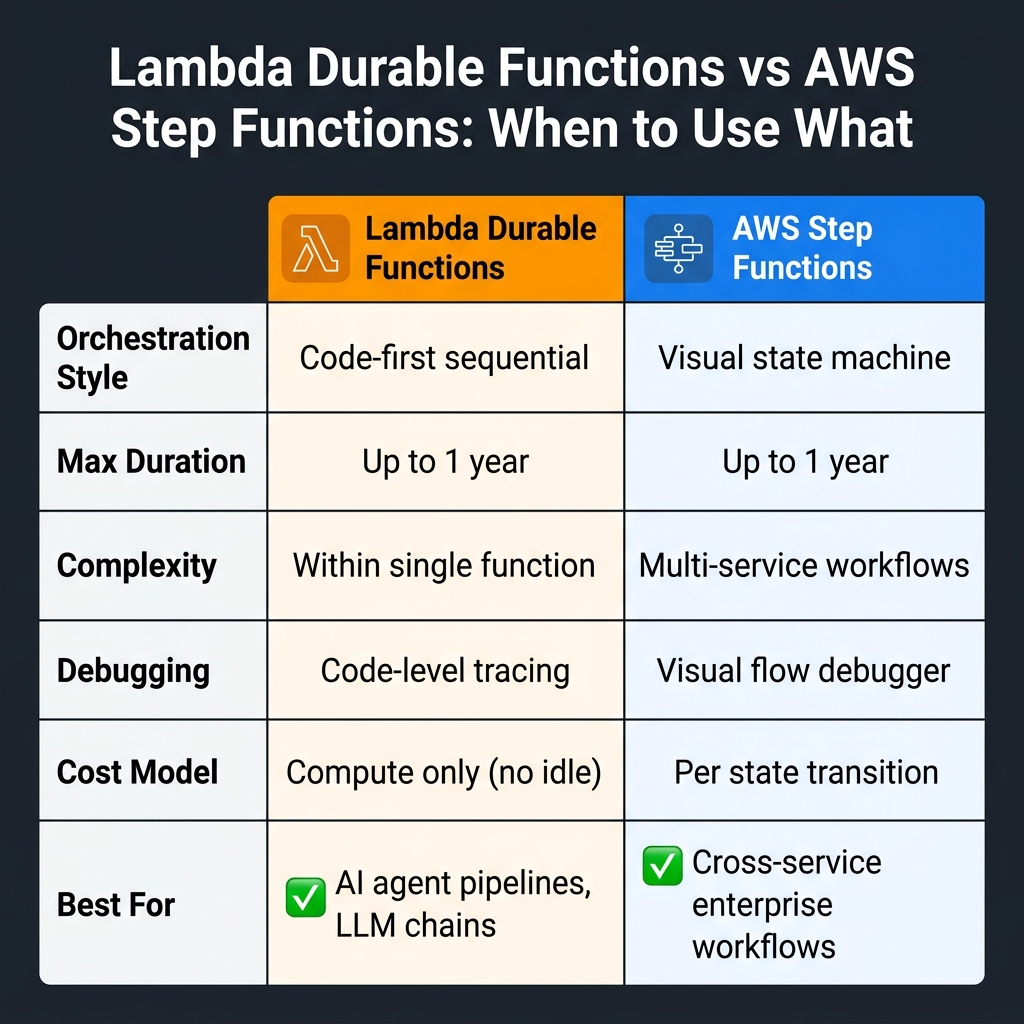

Lambda Durable Functions vs. AWS Step Functions: The Complete Decision Framework

The most common architectural question teams ask when evaluating Durable Functions is how it compares to AWS Step Functions. The answer is nuanced: these are complementary services, not competing ones. The right choice depends on three axes — orchestration scope, team skillset, and debugging preferences.

Choose Lambda Durable Functions when: your entire workflow logic lives within a single function’s codebase, you want code-first workflow definition without a separate JSON/YAML state machine, your team is fluent in Python or TypeScript and prefers debuggable unit-testable code, and your workflows involve tight loops with variable iteration counts (like AI agent loops).

Choose AWS Step Functions when: your workflow orchestrates multiple distinct AWS services (ECS tasks, SageMaker endpoints, Glue jobs, DynamoDB operations) as first-class participants, not just API calls from inside Lambda; when you need the visual workflow designer for stakeholder communication; when you require the 200+ SDK integrations that allow Step Functions to call services directly without Lambda intermediaries; or when your compliance posture requires an externally auditable state machine definition.

flowchart TD

A["New Workflow Requirement"] --> B{"Multi-service orchestration?"}

B -->|"Yes: ECS, Glue, SageMaker as direct steps"| C["AWS Step Functions"]

B -->|"No: logic lives within one function"| D{"Long-running or stateful?"}

D -->|"Yes: approvals, AI agent loops"| E["Lambda Durable Functions"]

D -->|"No: sub-15 min stateless"| F["Standard Lambda"]

C --> G["Visual Workflow Designer\nPer-state-transition pricing"]

E --> H["Code-first sequential logic\nCompute-only pricing"]

F --> I["Simple event handler\nLowest overhead"]Error Handling, Retries, and Idempotency at Scale

Production durable workflows demand careful thought about failure modes. The SDK’s retry configuration handles transient failures (network timeouts, throttling, temporary unavailability), but non-transient failures — invalid inputs, permanent service errors, business rule violations — require explicit handling in your step logic.

from durable_execution import DurableContext, StepError

class InsufficientInventoryError(Exception):

"""Non-retryable business logic error"""

pass

def fulfillment_workflow(event, context):

dc = DurableContext(context)

try:

inventory = dc.step(

name="reserve-inventory",

fn=lambda: reserve_stock_with_validation(event["sku"], event["qty"]),

retry_config={

"max_attempts": 4,

"non_retryable_errors": [

"InsufficientInventoryError", # Don't retry stockouts

"InvalidSKUError"

]

}

)

except StepError as e:

if e.cause_type == "InsufficientInventoryError":

# Compensate - notify customer, release payment hold

dc.step("notify-stockout", lambda: send_stockout_notification(event))

dc.step("release-payment-hold", lambda: void_authorization(event["auth_code"]))

return {"status": "cancelled", "reason": "stockout"}

raise # Unexpected error - propagate for dead-letter handling

dc.step("finalize-shipment", lambda: book_carrier(inventory["reservation_id"]))

return {"status": "fulfilled"}

Deployment: CDK, SAM, and CloudFormation

Durable Functions must be enabled at function creation time. The feature cannot be retrofitted onto an existing Lambda function. Plan your deployment accordingly — treat Durable Function enablement as an infrastructure change requiring a new function resource, not an in-place update.

# AWS SAM template for Lambda Durable Function

AWSTemplateFormatVersion: '2010-09-09'

Transform: AWS::Serverless-2016-10-31

Resources:

OrderWorkflow:

Type: AWS::Serverless::Function

Properties:

FunctionName: order-workflow-durable

Handler: handler.order_workflow

Runtime: python3.13

Timeout: 900 # Maximum Lambda timeout; waits extend beyond this

MemorySize: 1024

Architectures: [arm64] # Graviton3 - 20% cheaper per GB-second

# Enable Durable Functions

DurableConfig:

Enabled: true

JournalRetentionDays: 90 # How long to keep execution journals

Environment:

Variables:

POWERTOOLS_SERVICE_NAME: order-workflow

LOG_LEVEL: INFO

Policies:

- AWSLambdaBasicExecutionRole

- DynamoDBReadPolicy:

TableName: !Ref OrdersTable

- Statement:

- Effect: Allow

Action: [secretsmanager:GetSecretValue]

Resource: !Sub "arn:aws:secretsmanager:${AWS::Region}:${AWS::AccountId}:secret:payment-*"

Observability and Debugging in Production

Lambda Durable Functions emit structured execution events to CloudWatch Logs with full step names, durations, retry counts, and journal checkpoint metadata. AWS Lambda Powertools for Python integrates natively with Durable Functions to add correlation IDs, structured JSON logging, and X-Ray trace segments per step.

from aws_lambda_powertools import Logger, Tracer

from durable_execution import DurableContext

logger = Logger(service="order-workflow")

tracer = Tracer(service="order-workflow")

@logger.inject_lambda_context(correlation_id_path="headers.x-correlation-id")

@tracer.capture_lambda_handler

def handler(event, context):

dc = DurableContext(context)

with tracer.capture_method("validate-payment"):

payment = dc.step(

name="validate-payment",

fn=lambda: validate_payment(event["card_token"])

)

logger.info("Payment validated", txn_id=payment["txn_id"],

step_duration_ms=dc.last_step_duration_ms)

# dc.execution_id is stable across replays - use as correlation ID

logger.append_keys(execution_id=dc.execution_id)

return {"execution_id": dc.execution_id}

Cost Modelling: When Durable Functions Save Money

The cost profile of Durable Functions is distinctive: you pay for compute only during active step execution. Wait periods — whether 30 seconds or 30 days — cost nothing. Compare this to alternatives:

EC2-based workflow engine (Temporal self-hosted): 24/7 instance costs for the history service, frontend, matching, and worker hosts. For a small cluster: ~$800-1,200/month minimum, regardless of workflow volume.

AWS Step Functions Standard: $0.025 per 1,000 state transitions. A 10-step workflow at 1M executions/month = $250/month in transitions, plus Lambda compute for each state.

Lambda Durable Functions: Lambda compute charges only during active execution. A workflow that processes for 2 seconds and waits 48 hours for human review costs the same as a 2-second-only Lambda invocation — the 48-hour wait is free. At 1M executions/month with 2 seconds active compute per execution on a 1 GB ARM64 function: ~$33.40/month in compute plus the usual Lambda request charges.

Limitations and Constraints You Need to Plan Around

Durable Functions are a powerful addition to the toolkit, but they have real constraints that shape architectural decisions:

New functions only: The feature cannot be enabled on existing Lambda functions. You must create new function resources. Plan blue-green migration for existing workflows.

Supported runtimes at GA: Python 3.12+, Node.js 22+, TypeScript via Node.js. Java and .NET support is on the roadmap but not yet GA.

Step result size: Individual step return values must be serialisable to JSON and are subject to the journal record size limits (currently 256 KB per step result). For larger outputs — large LLM responses, binary data — store the result in S3 and record only the S3 key as the step result.

Cold starts on resume: When a function is resumed after a wait, it incurs a cold start just like any other Lambda invocation. For latency-sensitive resume paths, configure Provisioned Concurrency on the function alias.

Debugging replays locally: The durable_execution SDK provides a local journal implementation for unit testing. Use it extensively — testing replay behaviour in isolation is essential and cannot be adequately done by simply running the handler once.

Key Takeaways

- Durable Execution SDK brings checkpoint-and-replay stateful orchestration natively into Lambda — no external state store, no Step Functions state machine required for single-function workflows

- The

stepsprimitive checkpoints progress and auto-retries with configurable backoff; thewaitsprimitive suspends execution at zero compute cost for hours or days - AI agent loops, multi-step payment flows, and long-running approval processes are the sweet spots — scenarios where the workflow is naturally sequential, code-owned, and interspersed with external waits

- Step Functions remains the right choice for cross-service orchestration with visual state machine definitions and 200+ SDK integrations

- Durable Functions costs dramatically less than EC2-hosted workflow engines and can outperform Step Functions on cost for high-volume workflows with long idle-wait periods

- Plan around the new-function-only constraint, JSON step result size limits, and the need for deterministic handler code before committing architectures to the feature

Discover more from C4: Container, Code, Cloud & Context

Subscribe to get the latest posts sent to your email.